Do you often come across the “context deadline exceeded” error in Kubernetes? Resolving it can seem complex, but a few straightforward steps can fix it.

What is Kubernetes?

Kubernetes (“kube” or K8s) is a well-known open-source platform for container orchestration. It automates many of the manual processes involved in deploying, managing, and scaling containerized applications.

What does the Kubernetes “context deadline exceeded” error mean?

Kubernetes jargon can be confusing. While Kubernetes brings many benefits to DevOps workflows, you may still run into configuration errors and other problems.

One such error is “context deadline exceeded.” As the name suggests, it means an operation did not complete within the time allotted to it.

The Go context package carries deadlines, cancellation signals, and request-scoped values across API boundaries. When a request carries a deadline and is not handled in time, the context is cancelled and the operation fails with the “context deadline exceeded” error.

When does this error occur?

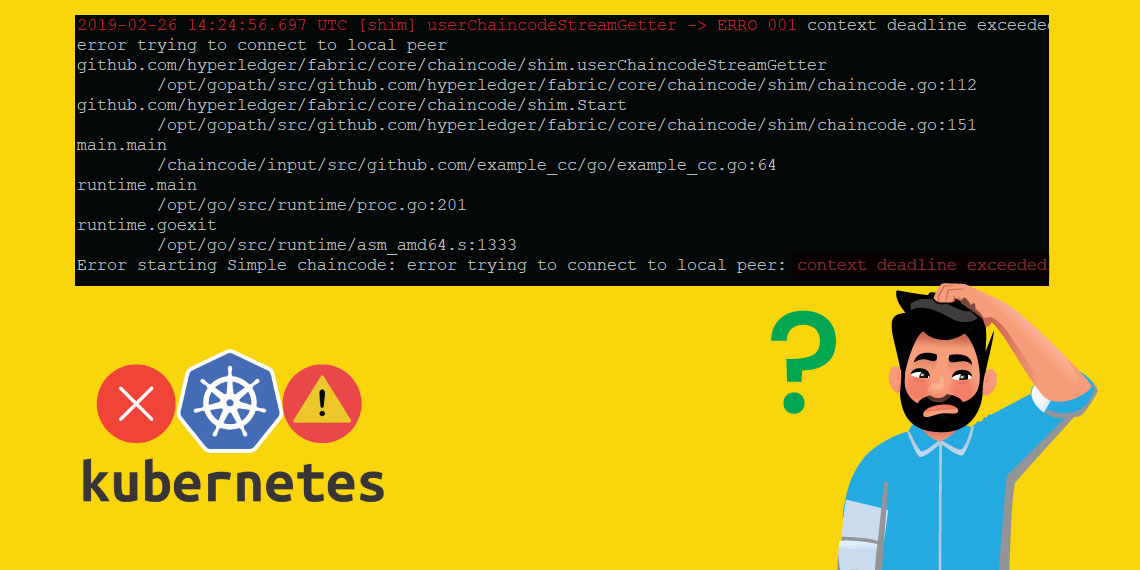

You expect your Kubernetes Pod to deploy and terminate seamlessly. But when the “context deadline exceeded” error occurs, the pod gets stuck in the ‘Init’ status. The error typically looks like this:

- Warning FailedCreatePodSandBox 93s (x8 over 29m) kubelet, 97011e0a-f47c-4673-ace7-d6f74cde9934 Failed to create pod sandbox: rpc error: code = DeadlineExceeded desc = context deadline exceeded

- Normal SandboxChanged 92s (x8 over 29m) kubelet, 97011e0a-f47c-4673-ace7-d6f74cde9934 Pod sandbox changed, it will be killed and re-created.

You can also find these errors in the kubelet's kubelet.stderr.log file.

You can also check the node-agent-hyperbus status by running the nsxcli command as the root user directly on the affected node:

- sudo -i

- /var/vcap/jobs/nsx-node-agent/bin/nsxcli

- At the nsxcli prompt, enter: get node-agent-hyperbus status

On a healthy node, the output is:

- HyperBus status: Healthy

When this problem is present, the command returns an error instead:

- % An internal error occurred

In this situation, DEL (delete) requests sent to the nsx-node-agent process repeat in a loop. Restarting that process resolves the error.

To access the worker node, use the bosh ssh command.

Then run the following commands:

- sudo -i

- monit restart nsx-node-agent

Once nsx-node-agent restarts, the error should clear.